Google Rolls Out Its Bard Chatbot to Battle ChatGPT

GOOGLE ISN’T USED to playing catch-up in either artificial intelligence or search, but today the company is hustling to show that it hasn’t lost its edge. It’s starting the rollout of a chatbot called Bard to do battle with the sensationally popular ChatGPT.

Bard, like ChatGPT, will answer questions about, and discuss, an almost inexhaustible range of subjects with what sometimes seems like humanlike understanding. Google showed WIRED several examples, including asking for activities for a child who is interested in bowling and requesting 20 books to read this year.

Bard is also like ChatGPT in that it will sometimes make things up and act weird. Google disclosed an example of it misstating the name of a plant suggested for growing indoors. “Bard’s an early experiment, it's not perfect, and it's gonna get things wrong occasionally,” says Eli Collins, a vice president of research at Google working on Bard.

Google says it has made Bard available to a small number of testers. From today anyone in the US and the UK will be able to apply for access.

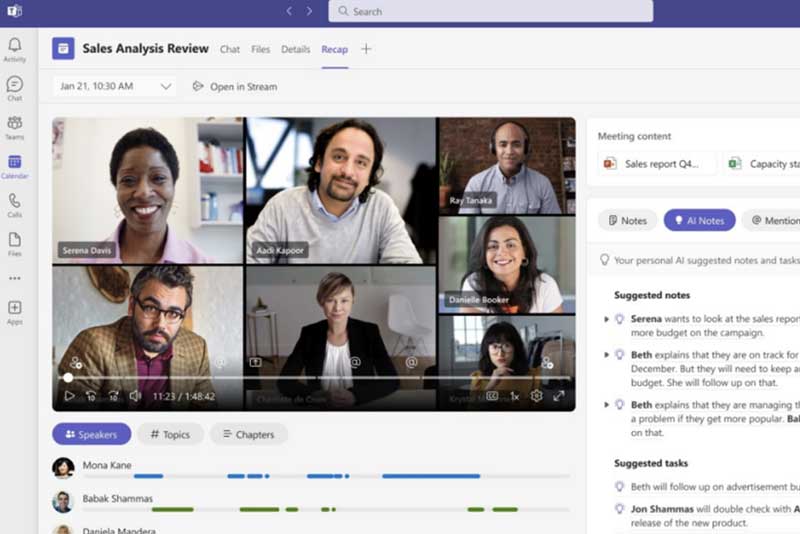

The bot will be accessible via its own web page and separate from Google’s regular search interface. It will offer three answers to each query—a design choice meant to impress upon users that Bard is generating answers on the fly and may sometimes make mistakes.

Google will also offer a recommended query for a conventional web search beneath each Bard response. And it will be possible for users to give feedback on its answers to help Google refine the bot by clicking a thumbs-up or thumbs-down, with the option to type in more detailed feedback.

Google says early users of Bard have found it a useful aid for generating ideas or text. Collins also acknowledges that some have successfully got it to misbehave, although he did not specify how or exactly what restrictions Google has tried to place on the bot.

Bard and ChatGPT show enormous potential and flexibility but are also unpredictable and still at an early stage of development. That presents a conundrum for companies hoping to gain an edge in advancing and harnessing the technology. For a company like Google with large established products, the challenge is particularly difficult.

Both chatbots use powerful AI models that predict the words that should follow a given sentence based on statistical patterns gleaned from enormous amounts of text training data. This turns out to be an incredibly effective way of mimicking human responses to questions, but it means that the algorithms will sometimes make up, or “hallucinate,” facts, which is a serious problem when a bot is supposed to be helping users find information or search the web.
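To make the idea of predicting the next word from statistical patterns concrete, here is a toy sketch in Python. It is not how Bard or ChatGPT is built: those systems rely on very large transformer-based models, while this example only counts which word most often follows another in a tiny made-up corpus. The function names and the corpus are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    follower_counts = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        follower_counts[current_word][next_word] += 1
    return follower_counts


def predict_next(model, word):
    """Return the word seen most often after `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical miniature "training data" (an assumption for this sketch).
corpus = (
    "the cat sat on the mat . "
    "the cat ate the fish . "
    "the dog sat on the rug ."
)
model = train_bigram_model(corpus)

print(predict_next(model, "the"))      # most frequent follower of "the"
print(predict_next(model, "sat"))      # most frequent follower of "sat"
print(predict_next(model, "unicorn"))  # never seen in the corpus
```

Even this toy version shows where the trouble comes from: the model returns whatever is statistically likely given its training text, with no check on whether the result is true.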

ChatGPT-style bots can also regurgitate biases or language found in the darker corners of their training data, for example around race, gender, and age. They also tend to reflect back the way a user addresses them, causing them to readily act as if they have emotions and to be vulnerable to being nudged into saying strange and inappropriate things.

Google AI researchers invented several key innovations that went into the creation of ChatGPT. They include the type of machine-learning algorithm, known as a transformer, that was used to build the language model behind ChatGPT.

Google first demonstrated a chatbot built with these technologies in 2020 but chose to proceed cautiously, especially after one of its engineers triggered a storm of media coverage by arguing that a language model he was working on could be sentient. The fast-tracking of Bard shows how the excitement and hype around ChatGPT have jolted the company into taking more risks.

In February, shortly after investing $10 billion in ChatGPT’s creator OpenAI, Microsoft launched a conversational interface to its search engine Bing using the technology. China’s Baidu announced its own Ernie Bot earlier this month.

The race to develop and commercialize the technology seems to be accelerating. Last week OpenAI announced an improved version of the language model behind ChatGPT, called GPT-4. Google announced that it would make a powerful language model of its own, called PaLM, available for others to use via an API, and add text generation features to Google Workspace, its business software. And Microsoft showed off new features in Office that make use of ChatGPT.

Collins of Google says one reason the company is launching Bard now, when the bot is far from perfect, is the valuable data generated when people interact with the system. OpenAI and Microsoft already have that streaming in after their own launches. “Human feedback is a really important part of why we're launching Bard,” Collins says. “We want to broaden [it] beyond what we've gotten internally.”

“Google’s core existence has been threatened by Microsoft,” says Aravind Srinivas, cofounder and CEO of Perplexity AI, a search startup that is using technology like that at work in ChatGPT and Bard.

Perplexity’s own product does not have a chat-style interface, a design choice aimed at avoiding giving users the feeling of being in dialog with another intelligent being. Srinivas says giving Bard the capacity to speak like a person is “risky,” because it may mislead and confuse users.

And while he expects tools like Bard to improve dramatically in the coming years, he says Google is gambling that it won’t suffer reputational damage in the short term when Bard gets things wrong. “People may no longer take it for granted that Google is always right,” he says. “It’s very tricky for them.”